SINGAPORE (Feb 16): Scientists from Nanyang Technological University (NTU) have developed an ultrafast high-contrast camera that could help autonomous vehicles and drones “see” better in extreme road conditions and bad weather.

Unlike regular optical cameras, NTU’s Celex will not be blinded by bright light and is able to make out details in the dark. It can also capture the slightest movements and track objects in real time.

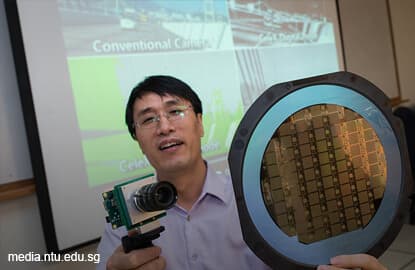

Developed by Assistant Professor Chen Shoushun from NTU’s School of Electrical and Electronic Engineering, Celex is now in its final prototype phase.

According to NTU, the prototype received positive feedback when it was unveiled last month at the 2017 IS&T International Symposium on Electronic Imaging in the US.

With keen interest from the industry, Chen and his researchers have spun off a startup company, Hillhouse Tech, to commercialise the new camera technology.

Chen says they are already in talks with global electronics manufacturers, and he expects the new camera to be commercially ready by the end of this year.

“Our new camera can be a great safety tool for autonomous vehicles, since it can see very far ahead like optical cameras, but without the time lag needed to analyse and process the video feed,” Chen says.

“With its continuous tracking feature and instant analysis of a scene, it complements existing optical and laser cameras and can help self-driving vehicles and drones avoid unexpected collisions that usually happen within seconds,” he adds.

The new camera records the changes in light intensity between scenes at nanosecond intervals — much faster than conventional video — and stores the images in a data format that is many times smaller.
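
To illustrate why recording only changes in light intensity can take far less data than storing full frames, here is a minimal Python sketch. It is not Celex's actual circuitry or data format: the function name `change_events`, the threshold value and the tiny 4x4 frames are all assumptions made for this example.

```python
from typing import List, Tuple

Frame = List[List[int]]        # grayscale intensities, 0-255 (assumed layout)
Event = Tuple[int, int, int]   # (row, col, signed intensity change)


def change_events(prev: Frame, curr: Frame, threshold: int = 10) -> List[Event]:
    """Return one event per pixel whose intensity changed by at least `threshold`.

    Hypothetical helper for illustration only; pixels that did not change
    produce no output at all.
    """
    events = []
    for r, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for c, (p, q) in enumerate(zip(prev_row, curr_row)):
            delta = q - p
            if abs(delta) >= threshold:
                events.append((r, c, delta))
    return events


# A static 4x4 scene in which a single pixel brightens.
before = [[50] * 4 for _ in range(4)]
after = [row[:] for row in before]
after[2][1] = 200

events = change_events(before, after)
print(events)  # [(2, 1, 150)]
print(len(events), "event recorded, versus", 16, "pixels in a full frame")
```

In this toy scene only one of the 16 pixels changes, so a single event is recorded instead of a whole frame, which is the general idea behind a much smaller data format.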

With a unique in-built circuit, the camera can do an instant analysis of the captured scenes, highlighting important objects and details.

Since research into the sensor technology started in 2009, the project has received S$500,000 in funding through a Ministry of Education Tier 1 research grant and a Singapore-MIT Alliance for Research and Technology (SMART) Proof-of-Concept grant.